Mitom is the number one football streaming channel on the market today, drawing visits from millions of users. Fans who follow livestreams here not only watch for free but also get Full HD picture quality, lively sound, and many useful features.

How did Mitom live football streaming come about?

Before looking at the platform's useful features and benefits, here is a brief overview of why it was created and how it has developed.

mitomtv had to compete with many “big names” when it launched

When mì tôm tv first appeared, there were already many football livestream channels. Football was hugely popular by then, so numerous platforms had sprung up to meet fans' demand for watching sport.

Several well-known platforms with large user bases were already established when mitom tv launched. The best known was xoilac, which had been around for many years and whose features were rated positively by fans.

So for bóng đá mì tôm to grow into what it is today, the management team had to work very hard to compete and attract visitors. After years of sustained effort, mitom truc tiep bong da has now established its position. mitom net can even stand alongside the other “big names” that have been on the market far longer.

Why was mì tôm tv created?

As noted above, many streaming channels already existed when mitom.tv live appeared. The management team nevertheless decided to launch their own platform because they saw certain shortcomings in the existing livestream channels.

As sports fans themselves, they had used the platforms available at the time. Because those channels did not fully meet their needs, and driven by a strong passion for sport, they pooled capital and invested to bring mitom live into being.

The purpose of this football livestream channel is simply to satisfy the passion of users who love the beautiful game. Beyond that, we want everyone to be able to watch football broadcasts for free, without paying any fee.

mitom live aims to become Asia's leading platform

This is the platform's goal for the next 5 to 10 years. mi tom tv wants to bring its service to more users in more countries.

To that end, the management team has not hesitated to invest a large budget in more modern, advanced technology, upgrading service quality to meet the needs of more people and attract more users.

Mitom net hopes to become the first football streaming platform from Vietnam to expand across Asia, and in doing so to help Vietnam “stand shoulder to shoulder with the great powers of the five continents”.

The platform is still working continuously to make that goal a reality. Look out for further impressive developments from the football livestream channel truc tiep bong da mitom!

Mitom live meets every user need on the platform

It is no accident that our platform is rated the most reputable and trustworthy on the market today. Visit it and you can indulge your passion for football. It also serves as an encyclopedia of sorts, helping users with all their football-related needs.

Top-quality football livestreams

This is the platform's most basic feature, and also its most important. On this website you can watch football matches of every scale from countries around the world.

mì tôm tv can meet the viewing needs of a broad audience with the hundreds of football competitions for which we hold broadcast rights.

mitom tv integrates football Livescore

While watching a broadcast, you can keep track of every figure as it appears, which makes it easy to take stock without having to memorize anything. That is thanks to the modern livescore feature that the management team has integrated into the platform.

As a result, match results are updated in real time, almost immediately after an incident occurs and the referee has ruled on it. Users of truc tiep bong da mitom rate this as the most useful feature, one that makes following a match more engaging.

Follow the fixture schedule on mì tôm tv

You can now be more proactive about following exciting matches. Because full timing information is provided, including both the date and the kick-off time, you can arrange work and personal life around the top matches.

Beyond the time of each match, the website also provides other brief pre-match information, giving fans an overall picture of the upcoming game.

Specifically, this includes expected starting line-ups, each team's recent results in the competition, head-to-head history, and more.

Get the most accurate football results

According to our own statistics, every user of the platform visits this section. We originally built it for users who had missed a match they cared about, so that they could get an overview and picture the incidents that took place.

In practice, however, even those who watched the full 90 minutes still use this feature. You may have missed an important detail and want to check it, or you may want to rewatch the highlight clips of key incidents that mì tôm trực tiếp bóng đá integrates into the platform.

League tables updated quickly

Using the league table, you can picture the overall state of a season. According to experts, fans prefer to follow competitions whose tables change a lot.

Such movement shows that a competition is attractive and dramatic, with every club giving its all to finish as high as possible. The information in this section also tells you what form your favorite team is in and how far it is from the title.

Early on, this section held fairly simple information: just each club's name and its position in the table. Since then, mì tôm tv has developed the feature to include more useful information.

Specifically, it now shows points accumulated, matches played, wins, draws, losses, and more.

Bóng đá mì tôm's odds table is reputable and trustworthy

For bettors, this is the most visited feature, often called their most effective assistant. mitom truc tiep is a free football streaming channel; the platform operates on a non-profit basis and does not partner with any bookmaker.

All information and figures displayed are therefore guaranteed to be the output of a supercomputer system. We do not tamper with those figures to deceive users or steer bettors toward any particular market. This ensures that you can enjoy your passion for sport and bet on football as safely as possible.

That is also why, although many websites now offer odds tables, mitom tv still attracts the most fans.

Bóng đá mì tôm delivers a top-class experience

Beyond its interesting features, the platform also gives you a top-class football viewing experience. Read on to find out why, despite launching later, trực tiếp bóng đá mì tôm quickly rose to the number one position in football livestreaming!

Sharp picture, smooth motion

Every competition on the platform is shown in Full HD picture quality. That is because we buy the rights to football competitions large and small from around the world. In addition, by applying the latest technology, we commit to sharpening every image to give you the best possible experience.

Even the most demanding viewers will be satisfied with the picture quality that mì tôm tv delivers.

Stable connection with no lag or stutter

Another standout strength of mitom tv that users rate highly is its streaming speed. The connection is stable and committed to staying smooth, with no delay, lag, or stutter.

During a match, every moment on the pitch can be dramatic and compelling, because any one of them can help a club turn the game around and win.

Understanding this, the management team has hired the most professional staff to ensure the best possible streaming speed and meet everyone's viewing needs.

Impressive interface with logically organized sections

To serve everyone who wants to watch football, and so that fans of all ages can access and use mì tôm trực tiếp bóng đá, the management team put careful thought into the interface design.

From the very first visit, you will be struck by the interface's distinctive color scheme. The features are also laid out logically and deliberately so that anyone can find and use them. If you are not tech-savvy, there is no need to worry: you can use the platform confidently from your first visit.

Live comments on livestreams

To liven up the livestream rooms during broadcasts, mì tôm tv has added a comment feature, so you can freely share your thoughts and feelings with other users who share your passion.

Thanks to a civil user community, you need not worry about being insulted or dismissed. People listen to and respect your opinion and can join the discussion with you to create a livelier atmosphere.

mitomtv's professional commentary team

Listening to the commentators not only helps you understand what is happening on the pitch but also builds your knowledge of your favorite sport.

The experts' insights are easy to follow because we use the simplest possible language, making specialist topics accessible to everyone.

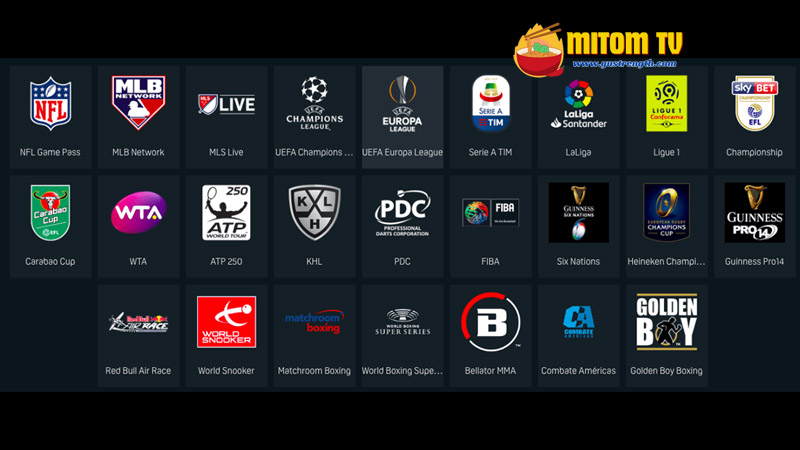

999+ licensed football competitions for users to explore

Although the platform is non-profit and does not charge users, the management team has not hesitated to invest in buying the rights to hundreds of football competitions. You can watch not only the most prestigious competitions, such as the Premier League, the UEFA Champions League, the World Cup, and the EURO, but also many others, including friendlies and minor leagues.

At almost any hour of the day, there is a match to watch. So you will not be left bored, opening trực tiếp bóng đá mì tôm only to leave again.

Full coverage of news about the king of sports

Besides modern streaming features, we also provide news about the sport you care about, so that even from home you can get an overall picture of how football is developing.

In the News section of mì tôm tv, you can follow the latest news, reported with high accuracy. Coverage includes tournament dates, rule changes, trophies, awards, and club developments such as squads, player transfers, financial difficulties, and the private lives of players or coaches.

mitom tv's editors and reporters are also present around the world, conducting exclusive interviews on the issues its users care about. This is another strength that shows we care about our fans' needs.

Top football competitions currently streamed by mitom

With 999+ competitions, you can fully indulge your passion for football. The ones that draw the largest audiences on our platform include:

The World Cup, the world's premier tournament

This leading tournament attracts the attention of billions of fans each time it is held. Whichever national team wins it can claim to be world champion.

That is why every nation taking part in this FIFA tournament strives through each match to advance to the next round, moving closer to the prestigious trophy and bringing glory home.

Understanding what users want to watch, mitomtv 1 has bought the rights to every match in the competition, from the qualifiers to the finals. Visit our platform to enjoy them.

EURO broadcast rights on mitomtv

UEFA EURO is another compelling competition that many users follow. It is the top-level European tournament for the national teams of the Old Continent.

Matches in this tournament are highly compelling and deliver the most dramatic experiences. Why wait? Visit our platform now to watch every match free of charge.

Mitom 5 streams every Premier League match

Although it is a domestic league, the Premier League attracts fans all over the world. It ranks at the top among national leagues and, despite its long history, continues to uphold its prestige and appeal.

Watch the UEFA Champions League with mitomtv 1 users

Any list of the most compelling competitions on mitom tv live must include the UEFA Champions League, known in Vietnamese as Cúp C1 Châu Âu. It is held annually and contested by Europe's leading clubs.

The prestigious trophy is the ultimate goal of the teams competing, which makes for extremely tense and dramatic contests.

La Liga, Spain's top football league

Spain is also a major footballing nation, home to world-famous clubs such as Real Madrid and Barcelona. If you are a fan of these clubs, why wait? Visit mitom 5 to follow the best matches of La Liga, the Spanish national championship.

Mitom bong da buys the rights to Germany's top competitions

The Bundesliga is another compelling competition not to be missed when you visit mitomtv 1's football livestream platform. You will see dramatic head-to-head clashes and impressive, inventive goals from the star players competing in it.

Other top-level competitions on the mitom tv live website

Besides the competitions described above, you can also follow others that are just as professional and compelling, such as Serie A, the SEA Games, the UEFA Europa League, the AFC Champions League, the V-League, Mexico's Liga MX, Japan's national football championship, the Copa America (the South American championship), France's Ligue 1, the Asian Cup, the Southeast Asian football championship, and more.

Q&A: Answers to frequently asked questions

Below are some questions you may also have for trực tiếp bóng đá mitom. Read on for the answers!

Do you have to pay to watch on mì tôm bóng đá?

No. Our platform provides its service completely free to everyone. You do not need to pay or provide any information to the website in order to use every feature.

Are mitom bong da's football stream links safe?

Yes. Every link that bong da mitom provides on its official website has been carefully checked. You can therefore be confident that the links contain no malware and pose no risk to your device, such as viruses or hackers attacking it and stealing your information.

Conclusion

That is all the information mì tôm bóng đá wants to share with you about our football streaming channel. We hope it has given you an overall picture of the platform and the confidence to visit it for the best football livestream experience.

Every visit you make is a great source of support and encouragement for the website. It fuels the dedication of the management team at trực tiếp bóng đá mitom to the beautiful game and to developing the platform further!